For a system to be both effective and efficient the parts need to work in synergy. This requires both alignment and collaboration.

Systems that involve people and processes can exhibit complex behaviour. The rules of engagement also change as individuals learn and evolve their beliefs and their behaviours.

The values and the vision should be more fixed. If the goalposts are obscure or oscillate then confusion and chaos are inevitable.

So why is collaborative alignment so difficult to achieve?

One factor has already been mentioned: the lack of a common vision and a constant purpose.

Another factor is distrust of others. Our fear of exploitation, bullying, blame, and ridicule.

Distrust is a learned behaviour. Our natural inclination is trust. We have to learn distrust. We do this by copying trust-eroding behaviours that are displayed by our role models. So when leaders display these behaviours then we assume it is OK to behave that way too. And we dutifully emulate.

The most common trust-eroding behaviour is called discounting. It is a passive-aggressive habit characterised by repeated acts of omission, such as not replying to emails, not sharing information, not offering constructive feedback, not asking for other perspectives, and not challenging disrespectful behaviour.

There are many causal factors that lead to distrust … so there is no one-size-fits-all solution to dissolving it.

One factor is ineptitude.

This is the unwillingness to learn and to use available knowledge for improvement.

It is one of the many manifestations of incompetence. And it is an error of omission.

Whenever we are unable to solve a problem then we must always consider the possibility that we are inept. We do not tend to do that. Instead we prefer to jump to the conclusion that there is no solution or that the solution requires someone else doing something different. Not us.

The impossibility hypothesis is easy to disprove. If anyone has solved the problem, or a very similar one, and if they can provide evidence of what they did and how, then the problem cannot be impossible to solve.

The someone-else’s-fault hypothesis is trickier because proving it requires us to influence others effectively. And that is not easy. So we tend to resort to easier but less effective methods … manipulation, blame, bullying and so on.

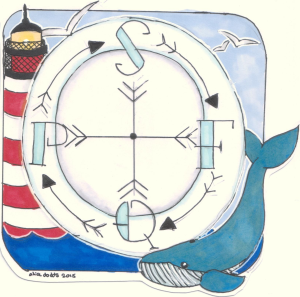

A useful way to view this dynamic is as a set of four concentric circles – with us at the centre.

The outermost circle is called the ‘Circle of Ignorance’. It is the collection of all the things that we do not know we do not know.

Just inside that is the ‘Circle of Concern’. These are things we know about but feel completely powerless to change, such as the fact that the world turns and the sun rises and sets with predictable regularity.

Inside that is the ‘Circle of Influence’ and it is a broad and continuous band – the further away the less influence we have; the nearer in the more we can do. This is the zone where most of the conflict and chaos arises.

The innermost is the ‘Circle of Control’. This is where we can make changes if we so choose. And this is where change starts and from where it spreads.

So if we want system-level improvements in safety, flow, quality and productivity (or cost) then we need to align these four circles. Or rather the gaps in them.

We start with the gaps in our circle of control. The things that we believe we cannot do … but when we try … we discover that we can (and always could).

With this new foundation of conscious competence we can start to build new relationships, develop trust, and better influence others in a win-win-win conversation.

And then we can collaborate to address our common concerns – the ones that require coherent effort. We can agree and achieve our common purpose, vision and goals.

And from there we will be able to explore the unknown opportunities that lie beyond. The ones we cannot see yet.